I tried an interesting experiment recently… What happens if you take a file in a proprietary format that runs only on a single OS platform, with no published spec, and you hand the plaintext export of that same file to a sophisticated coding agent? Maybe you ask it to generate not only the file spec but also the read/write library in the target programming language of your choice. And then walk away… How do you suppose that ends? Suh-PRISE! You get a rough draft of the spec that generalizes to your sample files, and you get the first iteration of...

GenAI Coding assistant updates

A colleague was asking what models I’ve tested for local GenAI coding, and what might fit on an MBP configured with enough RAM. TL;DR: These are the models I’ve downloaded to the DGX Spark for testing: Also, be aware that Ollama out of the box often has a small default context window (global setting) – which is too small to do anything meaningful in a codegen scenario – sample overrides below:
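The post's own overrides are not reproduced in this excerpt. As a minimal sketch of one per-request approach, assuming an Ollama server on its default local port, the `options.num_ctx` field of the `/api/generate` request can ask for a larger context window. The model name `my-code-model` and the 32768-token value are placeholders for illustration, not the settings used in the post; a sensible value depends on the model and available memory.

```python
import json
import urllib.request

# Ollama's default local endpoint; adjust if your server listens elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str, num_ctx: int = 32768) -> dict:
    """Build an /api/generate payload that requests a larger context window.

    num_ctx is the context length in tokens. The default here is an
    illustrative assumption, not a recommendation from the post.
    """
    if num_ctx <= 0:
        raise ValueError("num_ctx must be a positive number of tokens")
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,  # ask for a single JSON response instead of a stream
        "options": {"num_ctx": num_ctx},
    }


def generate(payload: dict, url: str = OLLAMA_URL) -> str:
    """POST the payload to a running Ollama server and return the generated text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]


if __name__ == "__main__":
    # "my-code-model" is a hypothetical placeholder; use a model you have pulled.
    payload = build_generate_request(
        "my-code-model", "Write a function that reverses a string."
    )
    print(json.dumps(payload, indent=2))
```

Running the script only prints the payload; calling `generate(payload)` requires a running server with the named model available.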

What a Machining Study Tells Us About the Future of Coding with AI Agents

A 2023 paper published in the Journal of Manufacturing Processes set out to answer a seemingly narrow question: how does a machinist’s behavior differ when working on a conventional (manual) milling machine versus a CNC machine? The answer turns out to be surprisingly relevant far beyond the machine shop, particularly for software engineers navigating the rise of AI coding agents.

The Study

Researchers at Rochester Institute of Technology designed a multi-modal study in which a trained machinist fabricated the same part (a leaf spring shackle) across four consecutive production runs on both conventional and CNC milling machines....

Claude’s take on “The Software Development Lifecycle Is Dead”

I was reading Boris Tane’s “The Software Development Lifecycle Is Dead” and agree with several of his points. However, with more than 30 years’ XP in industry, a couple of years working with GenAI, and the better part of the past decade actively researching neurosymbolic AI, I can see some oversimplifications in his post and some bleedover from the GenAI hype. That being said, and considering he calls out Claude Code in a few places as one of the silver bullets to SWE, I thought what better to analyze Boris’ post and provide counterpoints based on...

Gitea self-hosted runners

I just fired up a local instance of Gitea for stuff I don’t want Micro$oft and GitHub training Copilot on, or want anyone else scraping. However, one challenge with such a setup is running your trusty CI/CD workflows without GitHub’s infrastructure. Docker Compose to the rescue! After some wrong turns and some rubber ducking with Claude, I managed to construct a relatively simple Docker Compose file to keep a minimum number of ephemeral runner replicas up and registered with Gitea without requiring a complex Kubernetes setup. Now I can have my self-hosted Gitea instance run the same CI/CD jobs that...

Self-hosting GenAI code assistants

I’ve been playing with Claude Code for a few months now, and have been very impressed – but sometimes hit the limits of even the Pro Max plan when I’m multitasking. Which got me to thinking… I have a DGX Spark that sits idle when it isn’t training or fine-tuning LLMs for a side hustle, and it can handle pretty large models (albeit not at the thousands of tokens/s that commercial offerings generate). Maybe it would be fun to see how far I can get with self-hosted OSS solutions so I could experiment with things like Ralph and larger projects with BMAD...

Metadata Matters

Current data collection systems are drowning in data. Data scientists are trying to figure out how to leverage it. Metadata can help.

Autonomous Baby Steps

It seems like in every AI/ML feed I follow, there is yet another company claiming to go from fully manual to fully autonomous in a single, giant, disruptive leap. This kind of overhype is doing way more harm than good.

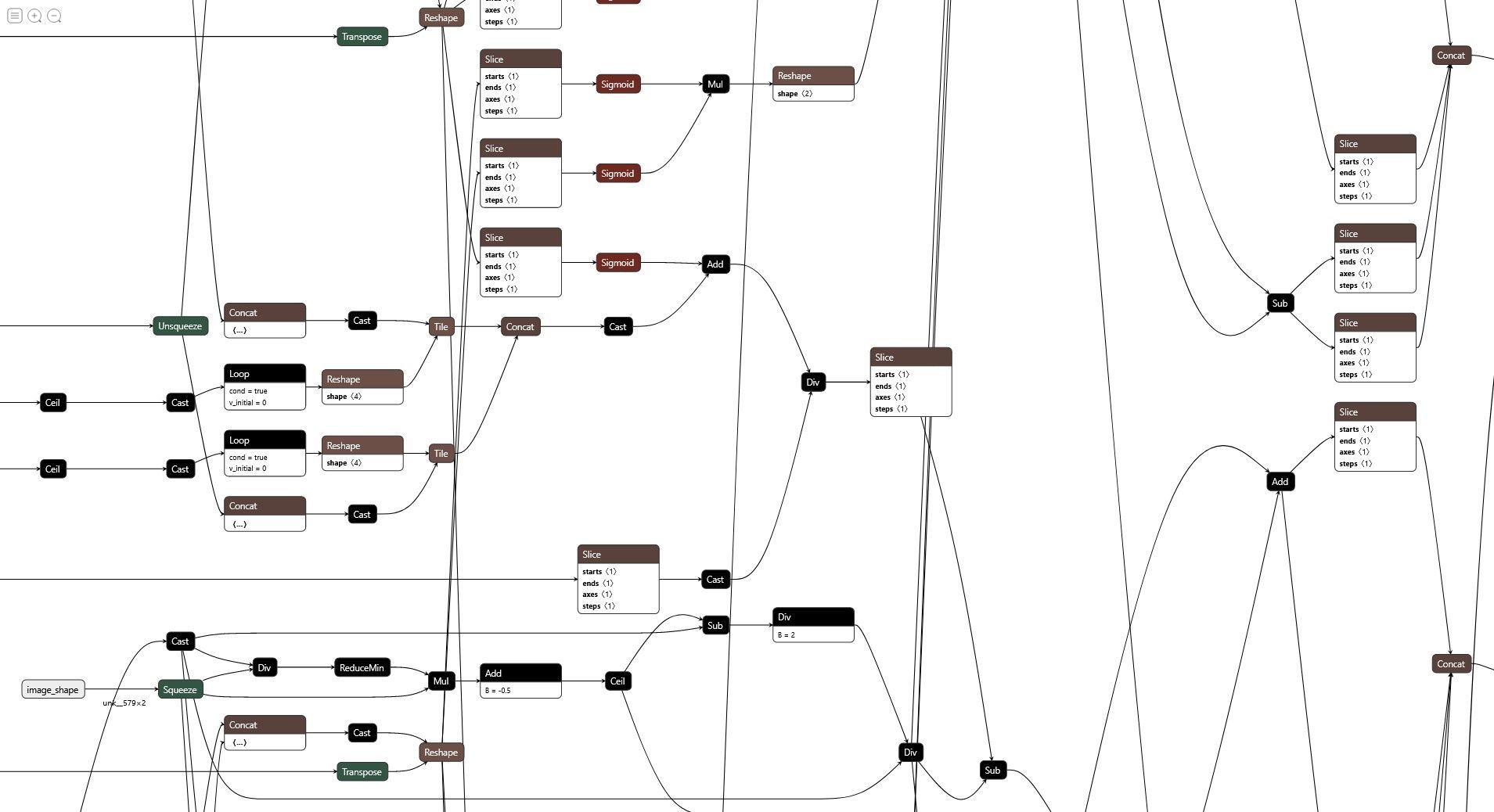

Working with Microsoft’s ONNX Runtime

We’ll explore leveraging the Microsoft ONNX Runtime as a deployment tool in the Java ecosystem for pre-trained ML models.

Natural Language Processing vs. Understanding

By now, almost anyone reading this blog has experienced a conversational agent, or “chatbot,” in some form or another. The funny thing is, as impressive as these modern chatbots are, they still have no real concept of understanding.
